I had 8 MCP servers in Python and kept wondering if I should rewrite them in Rust. Instead of guessing, I built the same server in 5 languages and measured.

This is not a benchmark of the MCP protocol. It’s a benchmark of realistic MCP server implementations: framework overhead, JSON handling, and server-side processing patterns. If your MCP server is a thin wrapper over an upstream API, then yes, this is mostly an HTTP server benchmark. That’s the point. I wanted to measure whether rewriting those wrappers into a faster language would change anything for the patterns I actually run.

For most proxy-style MCP servers, the language barely matters. For servers that do real work on the data before returning it, the JSON library you pick changes performance more than the language. I didn’t end up rewriting anything.

What I tested

Five frameworks, same 6 tools, same backend, same benchmark client. All running over Streamable HTTP transport (not SSE, not stdio).

- Python 3.13 with FastMCP + orjson

- Rust 1.94 with rmcp + serde typed structs

- Go 1.25 with mcp-go + bytedance/sonic

- TypeScript (Node 24) with the official MCP SDK

- C# (.NET 10) with the official C# SDK + System.Text.Json source generators

Two types of tools. Four proxy tools that forward a request to a shared backend API and return the response. Two compute tools that deserialize large payloads (4MB JSON, 2MB text), filter, aggregate, and return a summary. The proxy tools measure framework overhead. The compute tools measure what happens when the MCP server does real work on the data before returning it.

1000 requests per proxy tool, 200 per compute tool, 3 runs, median selected. All sequential, one request at a time, which matches how agents use MCP: send a tool call, wait for the result, then decide what to do next. Backend serves pre-generated fixtures from memory with zero computation per request so it’s not the bottleneck.
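The measurement loop described above can be sketched roughly as follows. This is a minimal illustration of "sequential requests, p50 per run, median of 3 runs", not the benchmark client itself; `p50_ms`, `median_of_runs`, and the `call` argument are hypothetical names, and `call` stands in for whatever function sends one MCP tool call and waits for the result.

```python
import statistics
import time
from typing import Callable


def p50_ms(call: Callable[[], object], requests: int) -> float:
    """Issue `requests` calls one at a time and return the median latency in ms."""
    samples = []
    for _ in range(requests):
        start = time.perf_counter()
        call()  # blocks until the result is back, like an agent waiting on a tool call
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


def median_of_runs(call: Callable[[], object], requests: int, runs: int = 3) -> float:
    """Repeat the whole measurement `runs` times and keep the median run."""
    return statistics.median(p50_ms(call, requests) for _ in range(runs))


# Placeholder workload so the sketch runs on its own; a real client would
# send a tool call over Streamable HTTP here.
result_ms = median_of_runs(lambda: sum(range(1000)), requests=50)
```

Taking the median of the per-run medians keeps one noisy run (a GC pause, a cold cache) from skewing the reported number.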

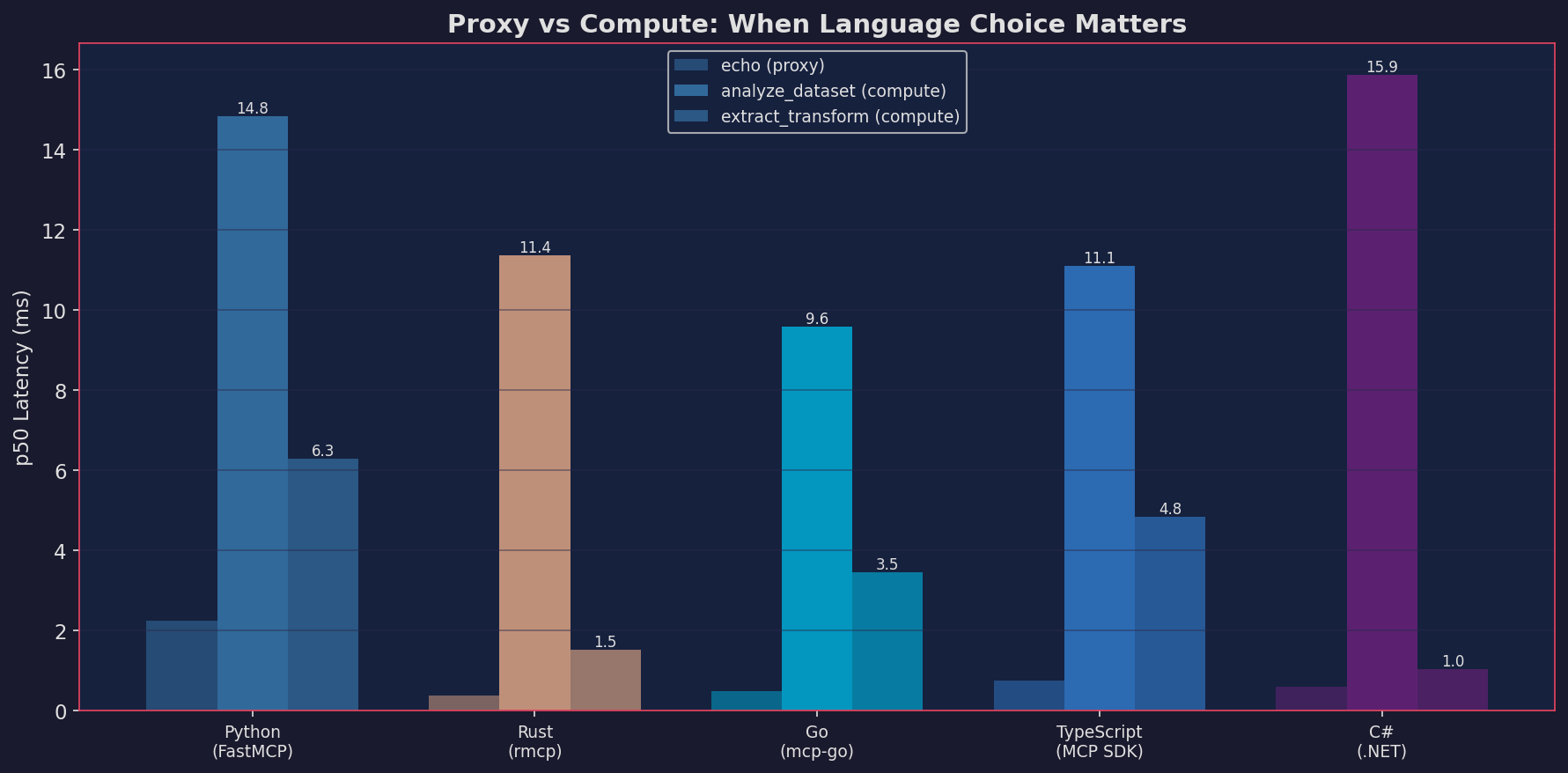

Proxy: the language is not the bottleneck

The echo tool (receive a request, call the backend, return the response) is the clearest test of pure framework overhead. Rust handles it in 0.38ms. Python takes 2.25ms. That’s a 6x difference.

In the deployments I care about, that difference is usually drowned out by network latency. The hop between MCP client and server is typically 1-10ms. The hop between the server and the upstream API is 50-500ms. A 2ms framework tax between two network hops of 10ms and 200ms doesn’t show up.
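To put numbers on that, here is the arithmetic as a small sketch. The framework figures are the echo-tool results from this benchmark; the 10ms and 200ms hops are the illustrative values from the paragraph above, not measurements, and `framework_share` is a name made up for this example.

```python
def framework_share(framework_ms: float, client_hop_ms: float, upstream_hop_ms: float) -> float:
    """Fraction of end-to-end tool-call latency spent in the MCP framework."""
    total = framework_ms + client_hop_ms + upstream_hop_ms
    return framework_ms / total


# Assumed hops: 10 ms client-to-server, 200 ms server-to-upstream.
python_total = 2.25 + 10 + 200  # 212.25 ms end to end
rust_total = 0.38 + 10 + 200    # 210.38 ms end to end

print(f"Python framework share: {framework_share(2.25, 10, 200):.1%}")  # 1.1%
print(f"Rust framework share:   {framework_share(0.38, 10, 200):.1%}")  # 0.2%
print(f"End-to-end saving from a rewrite: {1 - rust_total / python_total:.1%}")  # 0.9%
```

Under these assumptions, a 6x faster framework makes the whole tool call less than 1% faster.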

In this setup, framework overhead stayed under 3ms in every language. Once real network is involved, the differences mostly disappear. If your MCP server translates requests to an upstream API and passes the response back, write it in whatever you ship fastest.

Compute: when you process data server-side

Most MCP servers just proxy. But some need to do more. The upstream API returns raw data that isn’t shaped for the agent, or returns too much data to dump into context. The server pulls the data, filters, aggregates, and returns a summary. I wrote about this pattern in “Your MCP server is not an API adapter.” The results only start to change once the server stops proxying and starts shaping, filtering, or aggregating data before returning it.
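As a rough sketch of what such a compute tool does, the function below deserializes a payload, filters it, aggregates it, and returns a small summary. The record fields (`category`, `amount`) and the threshold are hypothetical and only illustrate the shape of the work; the actual benchmark tools may differ.

```python
import json
from collections import defaultdict


def summarize_records(payload: bytes, min_amount: float) -> dict:
    """Deserialize, filter, aggregate; return a summary small enough for context."""
    records = json.loads(payload)  # the expensive step for multi-MB payloads
    totals = defaultdict(float)
    kept = 0
    for record in records:
        if record["amount"] < min_amount:  # filter
            continue
        totals[record["category"]] += record["amount"]  # aggregate
        kept += 1
    return {
        "input_records": len(records),
        "matched_records": kept,
        "total_by_category": dict(totals),
    }


# Tiny stand-in for the upstream response (hypothetical data).
payload = json.dumps([
    {"category": "books", "amount": 12.5},
    {"category": "books", "amount": 3.0},
    {"category": "games", "amount": 40.0},
]).encode("utf-8")
summary = summarize_records(payload, min_amount=10.0)
```

The agent receives a few dozen bytes of summary instead of the full upstream response, which is the reason for doing the work on the server.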

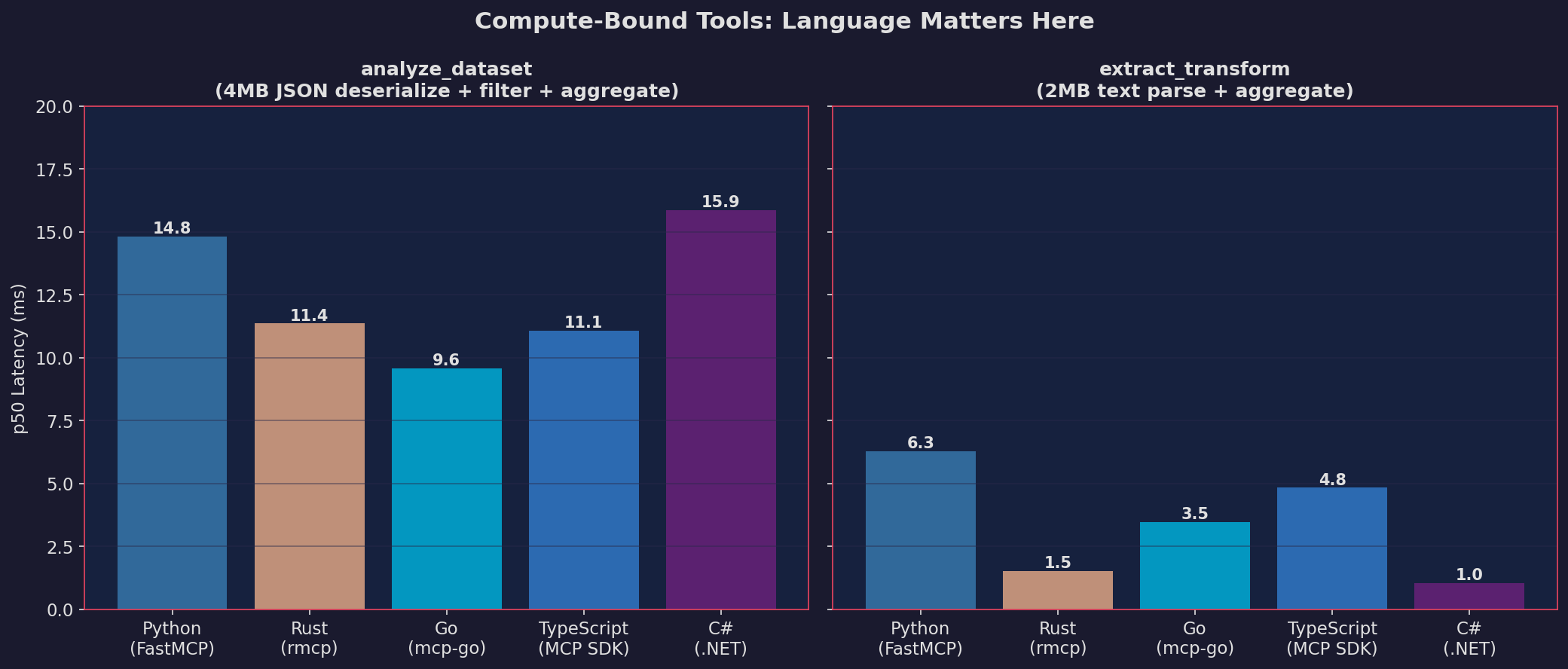

Even in these cases, the differences are smaller than I expected. For JSON deserialization + filtering + aggregation (4MB input, 10K records):

| | Python | Rust | Go | TypeScript | C# |
|---|---|---|---|---|---|
| p50 (ms) | 14.8 | 11.4 | 9.6 | 11.1 | 15.9 |

For text parsing + aggregation (2MB input, 15K log lines):

| | Python | Rust | Go | TypeScript | C# |
|---|---|---|---|---|---|
| p50 (ms) | 6.3 | 1.5 | 3.5 | 4.8 | 1.0 |

Go won the JSON benchmark in this setup, likely helped by sonic’s SIMD path on ARM64. C# won text parsing, where ReadOnlySpan<char> kept allocations down. Rust placed third on JSON and second on text parsing: close to the top in both, without winning either. TypeScript’s V8 JSON.parse is competitive with compiled languages on JSON. On JSON, Python with orjson is about 1.5x slower than the best, not 10x; the gap is wider on text parsing (6.3ms against 1.0ms).

Even for compute-heavy tools, the spread between fastest and slowest is 15.9ms vs 9.6ms for JSON, 6.3ms vs 1.0ms for text. For a tool that runs once per agent turn, that’s the difference between “fast” and “slightly faster.” It would take a very specific use case, something like processing data on every tool call in a tight loop, for this to justify a rewrite from Python to a compiled language.

JSON library matters more than language

Before I optimized each server, the results looked completely different.

Go with encoding/json (stdlib, uses reflection): 43ms. Same Go with bytedance/sonic (SIMD-accelerated): 9.6ms. One import changed, 4.5x faster.

Rust with serde_json::Value (untyped, heap-allocates every field): 30ms. Rust was slower than Python. Switching to typed structs with #[derive(Deserialize)] dropped it to 11.4ms. The untyped version allocated 150K+ individual objects for 10K records.

Python with json.loads: 21ms. Python with orjson: 14.8ms.

In the three languages where I compared before and after (Go, Rust, Python), the default or most obvious JSON path was slow for large payloads. The optimized library in the same language often gives a bigger improvement than switching to a faster language with default settings. If you’re doing compute in an MCP server and you haven’t checked which JSON library you’re using, start there.
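In Python, checking the JSON library can be as small as the sketch below: use orjson when it is installed and fall back to the standard library otherwise. The wrapper names `loads` and `dumps` are just a convention for this example. The one behavioral difference to watch is that `orjson.dumps` returns `bytes`, so the fallback encodes its output to match.

```python
import json

try:
    import orjson  # third-party: pip install orjson

    def loads(data):
        """Parse JSON from bytes or str using orjson."""
        return orjson.loads(data)

    def dumps(obj) -> bytes:
        """Serialize to compact UTF-8 bytes (orjson returns bytes, not str)."""
        return orjson.dumps(obj)

except ImportError:
    # Fallback so the server still runs where orjson is not installed.
    def loads(data):
        """Parse JSON from bytes or str using the standard library."""
        return json.loads(data)

    def dumps(obj) -> bytes:
        """Serialize with the standard library, encoded to bytes for parity."""
        return json.dumps(obj).encode("utf-8")


round_trip = loads(dumps({"records": [1, 2, 3], "ok": True}))
```

Keeping the swap behind two small functions means the rest of the server does not need to know which library is in use. Measure on your own payloads before assuming the gain carries over.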

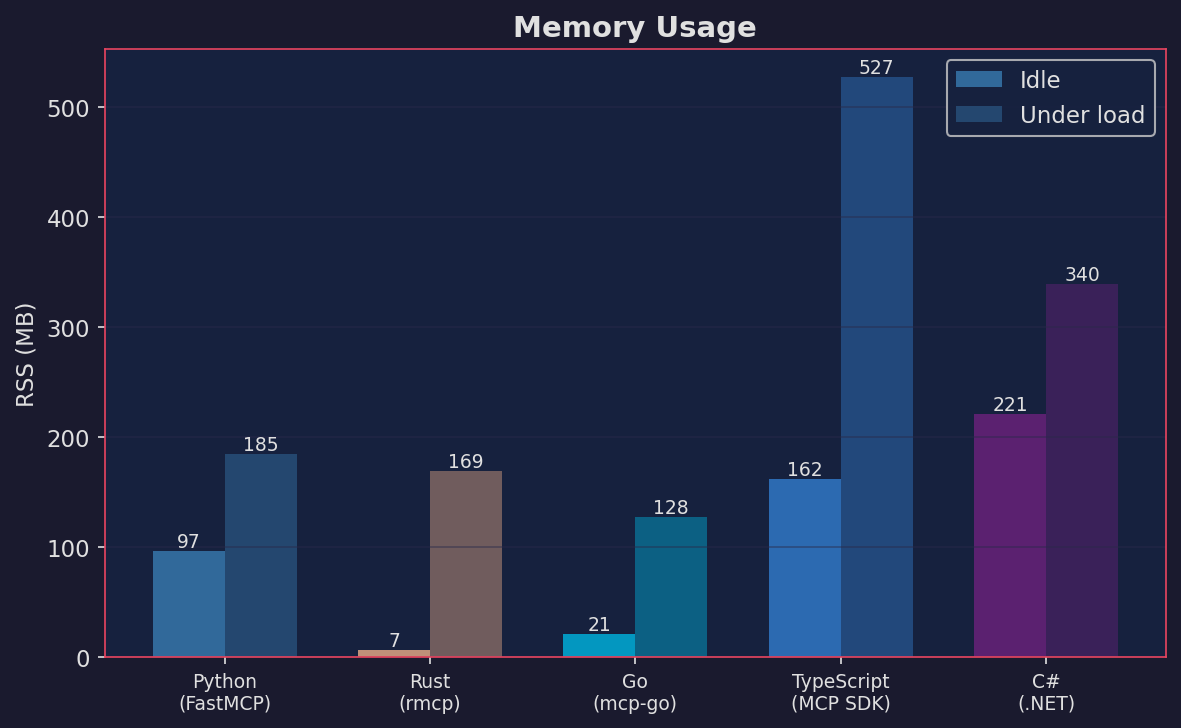

Memory

Idle RSS: Rust 7MB, Go 21MB, Python 97MB, TypeScript 162MB, C# 221MB.

Under load: Go 128MB, Rust 169MB, Python 185MB, C# 340MB, TypeScript 527MB.

TypeScript’s number is partly SDK design: the official MCP SDK creates a new server instance per HTTP request in stateless mode. C# sat at 2.1GB until I switched from Server GC to Workstation GC (Server GC, the default in my setup, pre-allocates large heap segments per CPU core, which is overkill for an MCP server handling one request at a time).

If you’re running many MCP servers on one machine, Rust and Go give you headroom. Otherwise this probably doesn’t drive the decision.

What this doesn’t cover

All tests ran on localhost, no TLS, no auth middleware, single client. Real deployments add network variability, TLS termination, and auth overhead that would dwarf the framework differences measured here. I only tested Streamable HTTP transport, not stdio or SSE. The compute tools are synthetic workloads designed to stress JSON and text processing, not a replay of real production traffic. Requests ran sequentially (one at a time), not concurrently, so this measures per-call latency, not throughput under load.

What I did after

I kept everything in Python. The benchmark confirmed what I suspected but hadn’t measured: for the proxy-heavy servers I run, network latency dominates framework overhead. For the rare server that does heavy server-side processing, Go and Rust are faster, but not by enough to justify learning a new language or asking Claude to generate MCP servers in languages I don’t know just to shave off a few milliseconds.

I did check the JSON library in every Python server after this. Swapping from stdlib json to orjson gave me a 30% improvement with a one-line change. That was the real win.

Full benchmark code and raw data: mcp-benchmark on GitHub.